Genomes

Genomes is a collaboration between znah (Alexander Mordvintsev) and myself, released in December 2023 on fxhash.

Alexander shares my interest in the study of artificial life. I have followed his work closely for many years: it is groundbreaking and foundational in the field, and, as one may tell, I'm quite a fan. One of his projects in particular, Neural Cellular Automata, brought an exciting new perspective to the field of Cellular Automata.

Interactive web demo of NCA

Source: Mordvintsev, et al., "Growing Neural Cellular Automata", Distill, 2020.

Neural Cellular Automata (NCA) are trained to reproduce images using machine learning. Each cell of the NCA holds 16 channels, 3 of which are used as the output [R, G, B] channels. Each cell of the grid is updated based on the values of its neighboring cells. Training aims to minimize the difference between the [R, G, B] channels and a target pattern.

A single update step of the model.

Source: Mordvintsev, et al., "Growing Neural Cellular Automata", Distill, 2020.
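To make the training objective concrete, here is a minimal Python sketch of a loss that compares only the visible [R, G, B] channels with the target and ignores the hidden ones. It assumes a plain per-pixel mean squared error, the simplest reading of "differences"; the losses actually used in the NCA papers may differ. The names `rgb_loss` and `NUM_CHANNELS` are hypothetical.

```python
# Sketch only: a cell is a list of NUM_CHANNELS floats, and (as described above)
# channels 0..2 hold the visible [R, G, B] output; the other channels are hidden.
NUM_CHANNELS = 16
RGB = slice(0, 3)


def rgb_loss(state, target):
    """Mean squared error between the RGB channels of an NCA state and a target image.

    state:  H x W grid (list of rows) of NUM_CHANNELS-long cells
    target: H x W grid of [r, g, b] pixels
    Hidden channels are ignored: only the visible output is compared to the target.
    """
    total = 0.0
    count = 0
    for state_row, target_row in zip(state, target):
        for cell, pixel in zip(state_row, target_row):
            for value, goal in zip(cell[RGB], pixel):
                total += (value - goal) ** 2
                count += 1
    return total / count


# Usage: a 2x2 state whose RGB channels already match the target scores 0,
# whatever its hidden channels contain.
target = [[[0.2, 0.4, 0.6]] * 2] * 2
state = [[[0.2, 0.4, 0.6] + [5.0] * (NUM_CHANNELS - 3) for _ in range(2)] for _ in range(2)]
print(rgb_loss(state, target))  # 0.0
```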

Training finds a set of weights that is then applied to every cell of the grid when the CA runs. Interestingly, although every cell shares the same weights, the CA is capable of regenerating large-scale target textures. The paper shows how simple, local, static rules allow for the generation of arbitrary large-scale patterns, probably akin to what happens in biology, where complex beings grow from single cells. Fascinating stuff.
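The idea that every cell applies the same weights can be sketched in a few lines of Python. This is a loose, untrained illustration modeled on the update step described in the Distill article: fixed identity and Sobel filters for perception, then a small network, shared by all cells, that produces a residual update. The function names, the hidden size, the random weights and the wrap-around edges are all assumptions, and the article's stochastic update mask and alive masking are left out.

```python
import random

# Sketch of one NCA update: each cell perceives its 3x3 neighbourhood through
# fixed filters, then a small network with ONE shared set of weights computes
# the update for every cell. Assumptions: toroidal edges, random (untrained)
# weights, no stochastic update mask and no alive masking.

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def perceive(grid, y, x):
    """Return identity + Sobel-x + Sobel-y responses for every channel of cell (y, x)."""
    h, w = len(grid), len(grid[0])
    channels = len(grid[y][x])
    features = list(grid[y][x])  # identity filter: the cell's own state
    for kernel in (SOBEL_X, SOBEL_Y):
        for c in range(channels):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    neighbour = grid[(y + dy) % h][(x + dx) % w]  # wrap at edges
                    acc += kernel[dy + 1][dx + 1] * neighbour[c]
            features.append(acc / 8.0)  # scaled Sobel response
    return features


def make_weights(channels, hidden, seed):
    """Random stand-in for trained weights: 3*channels -> hidden -> channels."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0.0, 0.1) for _ in range(3 * channels)] for _ in range(hidden)]
    w2 = [[rng.gauss(0.0, 0.1) for _ in range(hidden)] for _ in range(channels)]
    return w1, w2


def step(grid, weights):
    """Synchronous update: every cell runs the same network on its own neighbourhood."""
    w1, w2 = weights
    new_grid = []
    for y, row in enumerate(grid):
        new_row = []
        for x, cell in enumerate(row):
            p = perceive(grid, y, x)
            hidden = [max(0.0, sum(a * b for a, b in zip(w, p))) for w in w1]  # ReLU
            delta = [sum(a * b for a, b in zip(w, hidden)) for w in w2]
            new_row.append([v + d for v, d in zip(cell, delta)])  # residual update
        new_grid.append(new_row)
    return new_grid
```

Because the weights are shared and the perception filters are fixed, a perfectly uniform grid stays uniform after a step; any large-scale structure has to emerge from local differences between neighbours.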

While chatting with Thefunnyguys (a passionate collector of Generative Art and founder of Le Random), I showed him Growing Neural Cellular Automata. He immediately recognised Alexander's work and linked it to other work of his published on Hic et Nunc (which I wasn't aware of!). It then occurred to me that I could invite Alexander to collaborate with me on a project around NCA, and I immediately got excited by the idea. I reached out to him, and he expressed interest in working together. I was thrilled.

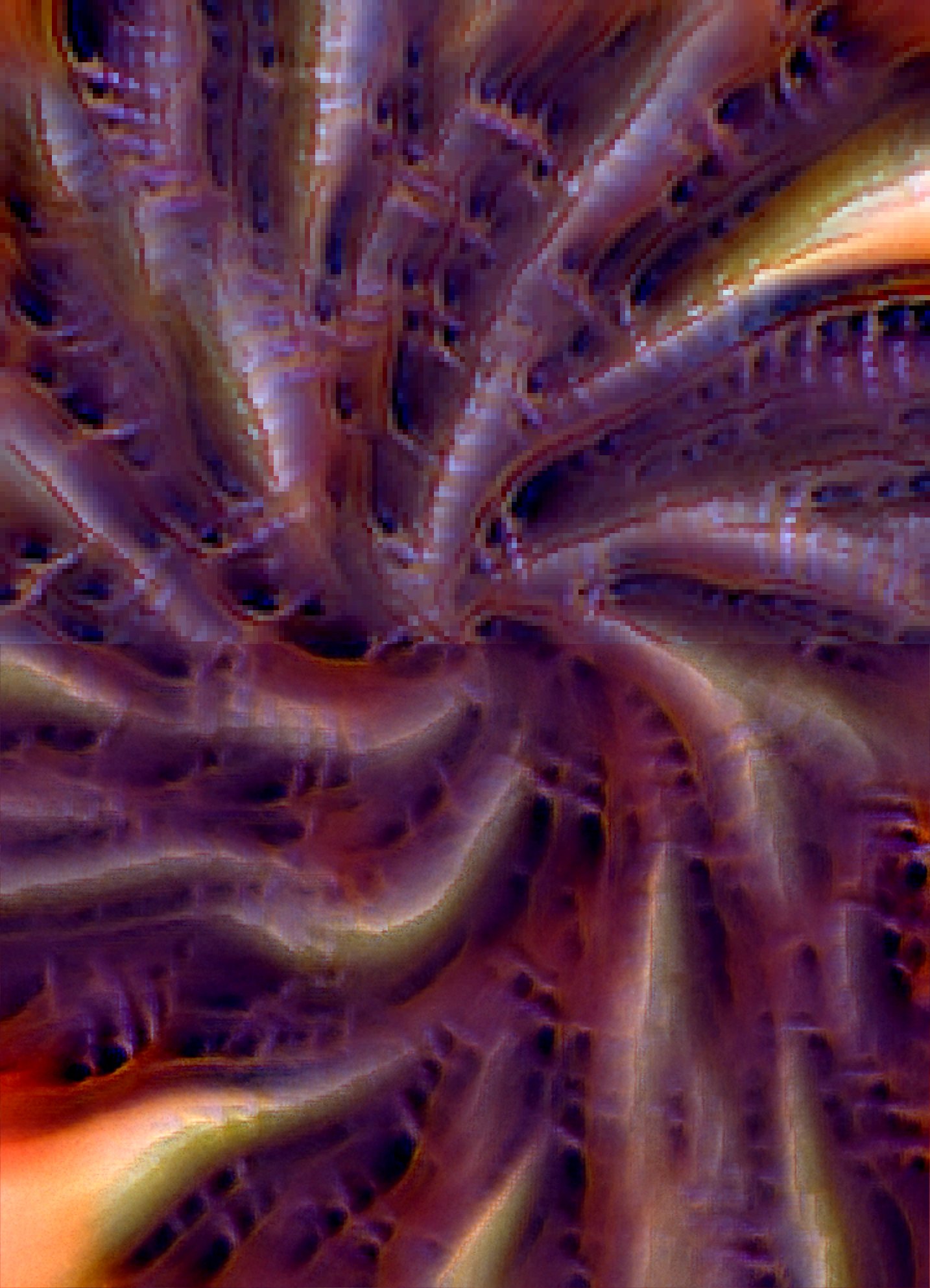

We started discussing, and Alexander began working on some ideas he had for the project. He very quickly came back with some early lifeforms, generated with Isotropic NCA, a variant of NCA where the CA can orient the sensor of each cell independently.
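One way to picture orienting the sensor of each cell is to give every cell a heading angle and express the gradients it perceives in its own rotated frame, so that a rule behaves the same way whatever direction it faces. The sketch below assumes the heading is stored in a hidden channel (`ANGLE_CHANNEL`, hypothetical) and that gradients come from filters like the Sobel ones of the original model; it illustrates the general idea, not the Isotropic NCA implementation.

```python
import math

# Hypothetical layout: one hidden channel stores the cell's heading angle (radians).
ANGLE_CHANNEL = 15


def to_local_frame(gx, gy, theta):
    """Project a world-space gradient onto a cell's own axes.

    The cell's local x axis points along its heading (cos theta, sin theta);
    its local y axis is that heading rotated by +90 degrees.
    """
    c, s = math.cos(theta), math.sin(theta)
    return c * gx + s * gy, -s * gx + c * gy


def oriented_features(cell, gradients):
    """Rotate every per-channel (gx, gy) gradient into the cell's own frame."""
    theta = cell[ANGLE_CHANNEL]
    features = []
    for gx, gy in gradients:
        features.extend(to_local_frame(gx, gy, theta))
    return features
```

For example, a world-space gradient along +x, seen by a cell heading along +y (`theta = math.pi / 2`), comes out along the cell's local -y axis: `to_local_frame(1.0, 0.0, math.pi / 2)` returns approximately `(0.0, -1.0)`. Rotating both the pattern and the heading by the same angle leaves the features unchanged, which is what makes such a rule direction-independent.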

Each pattern in the video is a different CA running on all the cells of its environment. In the context of long-form generative art, we needed a way to get varying outputs from a random seed. However, in its current state, the NCA could only produce a predefined set of outputs (as many as it had been trained on). Alexander started exploring breeding (mixing) NCA rules to introduce diversity.

Alexander exploring breeding
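The text doesn't say how the NCA rules were mixed, so the following is only a generic sketch of two common ways to combine two parents' weights (flattened into lists) into a seed-determined child: linear blending and per-weight crossover. `breed` and its `mode` parameter are hypothetical.

```python
import random


def breed(parent_a, parent_b, seed, mode="blend"):
    """Mix two flattened weight vectors into a child rule, deterministically from a seed.

    mode="blend":     child = (1 - t) * a + t * b, with t drawn from the seed
    mode="crossover": each weight is copied from one parent, chosen at random
    """
    if len(parent_a) != len(parent_b):
        raise ValueError("parents must have the same architecture")
    rng = random.Random(seed)  # same seed -> same child, so an output is reproducible
    if mode == "blend":
        t = rng.random()
        return [(1.0 - t) * a + t * b for a, b in zip(parent_a, parent_b)]
    if mode == "crossover":
        return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]
    raise ValueError(f"unknown mode: {mode}")
```

Naive mixes like these are not guaranteed to produce a working rule: two independently trained networks need not organize their hidden channels the same way, so a blended child can lose the behavior of both parents.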

I also started doing the same in parallel. I was feeling a bit out of my depth, though, because of the complexity of the ML pipeline, which Alexander handled. It took me a while to start getting interesting results.

Screen recording sent to Alexander to share my early results in mixing

In the meantime, Alexander made progress on mixing and achieved fairly consistent and promising breeding.

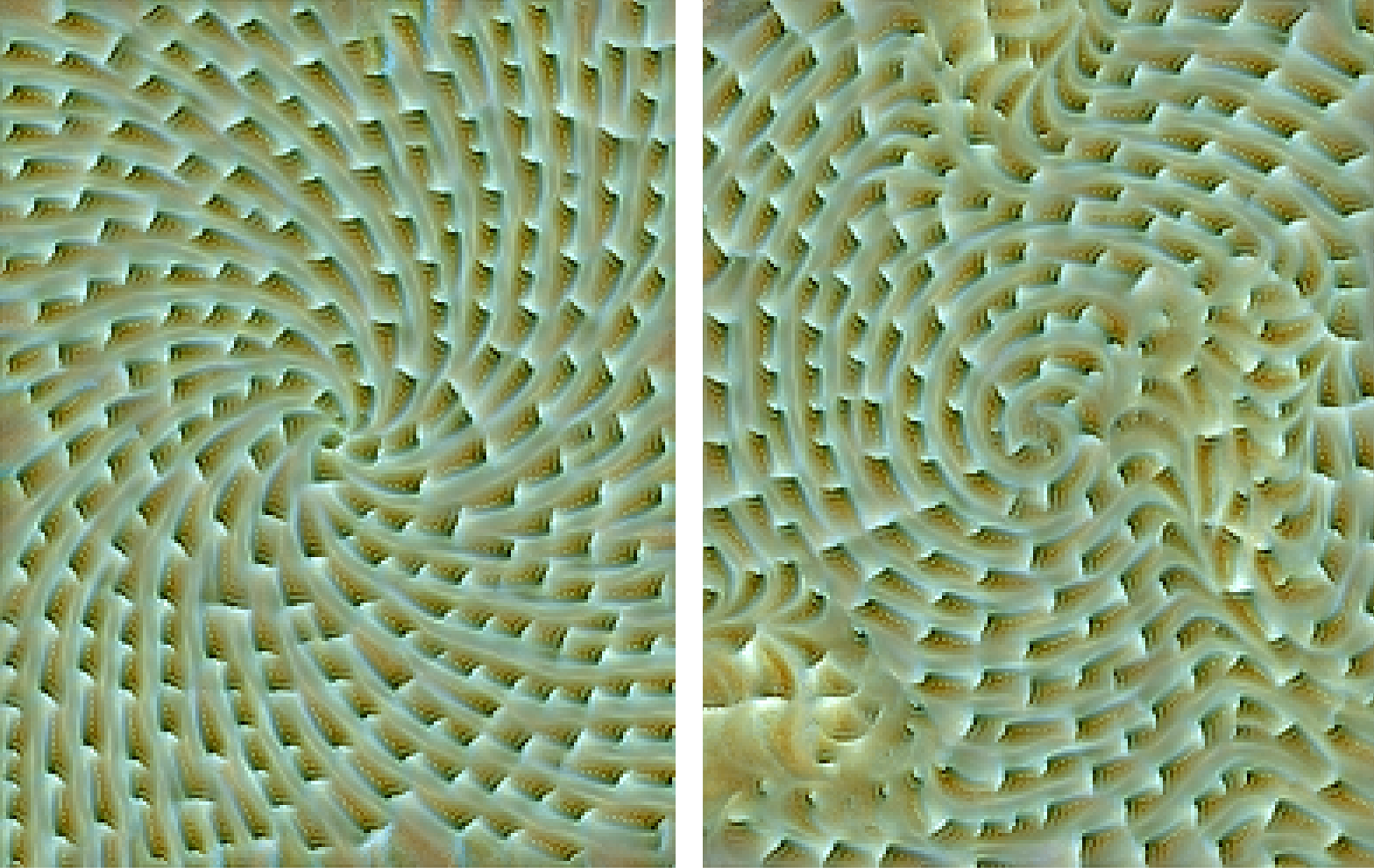

From left to right: Species A, breeding A<>B, Species B

I started exploring field transformations: the outputs lacked contrast and diversity, and I wanted to improve on that front.

Left: without noise, Right: with simplex noise

The outputs also looked a bit flat color-wise, so I worked on an adaptive color correction to adjust levels and get a better color range.

Left: without correction, Right: with correction
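Adaptive color correction of this kind is often done as an automatic levels adjustment: for each channel, find a low and a high percentile of the values and stretch that range to [0, 1]. The sketch below assumes that technique (percentile stretching), with hypothetical names and default percentiles; the correction actually used in Genomes isn't described.

```python
def percentile(sorted_values, q):
    """Nearest-rank percentile of an already sorted list, with q in [0, 1]."""
    index = round(q * (len(sorted_values) - 1))
    return sorted_values[min(len(sorted_values) - 1, max(0, index))]


def auto_levels(pixels, low_q=0.01, high_q=0.99):
    """Stretch each RGB channel so its low/high percentiles map to 0 and 1.

    pixels: list of (r, g, b) floats. Values outside the percentile range are clipped.
    A channel with no spread (low == high) is left unchanged.
    """
    corrected = []
    for c in range(3):
        values = [p[c] for p in pixels]
        ordered = sorted(values)
        lo, hi = percentile(ordered, low_q), percentile(ordered, high_q)
        if hi <= lo:
            corrected.append(values)  # flat channel: nothing to stretch
            continue
        scale = 1.0 / (hi - lo)
        corrected.append([min(1.0, max(0.0, (v - lo) * scale)) for v in values])
    # Re-assemble per-pixel (r, g, b) tuples from the corrected channels.
    return [tuple(channel[i] for channel in corrected) for i in range(len(pixels))]
```

Using percentiles rather than the raw minimum and maximum keeps a few outlier pixels from dictating the stretch, which is what makes the correction adapt to each output.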

In parallel, Alexander explored projecting the texture on various surfaces.

These outputs were super interesting, and there’s definitely a world where we would have explored this direction. However, we were getting close to the deadline and decided not to pursue this avenue.

At this point I was tinkering with the algorithm and discovered some nice properties when running the CA at multiple scales. For fun, I was attempting to get rid of the pixelation (an artifact of the CA grid cells). My first attempt was to run the NCA once on the grid, and then run multiple steps of the NCA on every pixel of the image (1 pixel = 1 cell). Getting a sharp result this way required a lot of computational power, as the pixel-level step had to be executed 20+ times per frame. This first attempt was pretty naive, but it uncovered some outstanding outputs.

The next improvement was pretty intuitive: instead of running the NCA only twice, at the initial grid size and at pixel size, we could run it recursively, halving the cell size at each level, down to pixel size. This sharpens the result progressively at a lower cost, while keeping some of the properties of the NCA visible at the different scales.
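The progressive scheme can be sketched as a loop over a resolution pyramid: run some NCA steps, upsample the grid 2x so that each cell becomes a 2x2 block, and repeat until one cell covers one pixel. `render_pyramid`, `upsample2x` and the pluggable `nca_step`/`steps_per_level` functions are hypothetical, and nearest-neighbour upsampling is an assumption.

```python
def upsample2x(grid):
    """Nearest-neighbour upsampling: each cell becomes a 2x2 block of copies."""
    out = []
    for row in grid:
        wide = [cell for cell in row for _ in range(2)]
        out.append(list(wide))
        out.append(list(wide))
    return out


def render_pyramid(coarse_grid, target_side, nca_step, steps_per_level):
    """Run an NCA at successively finer resolutions until the grid reaches target_side.

    coarse_grid:     square grid (list of rows) of immutable cells, e.g. tuples
    target_side:     output side in pixels; assumed to be coarse side * 2**k
    nca_step:        function grid -> new grid applying one NCA update
    steps_per_level: function level -> number of NCA steps (level 0 = coarsest)
    """
    if not coarse_grid:
        raise ValueError("coarse_grid must not be empty")
    grid = coarse_grid
    level = 0
    while True:
        for _ in range(steps_per_level(level)):
            grid = nca_step(grid)
        if len(grid) >= target_side:
            return grid  # one cell per pixel reached
        grid = upsample2x(grid)  # halve the cell size: 1 cell -> 2x2 cells
        level += 1
```

Each finer level has four times as many cells as the previous one, so most of the cost lands on the last few levels while the coarse levels are cheap; that is what makes progressive refinement affordable compared with many passes at pixel size.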

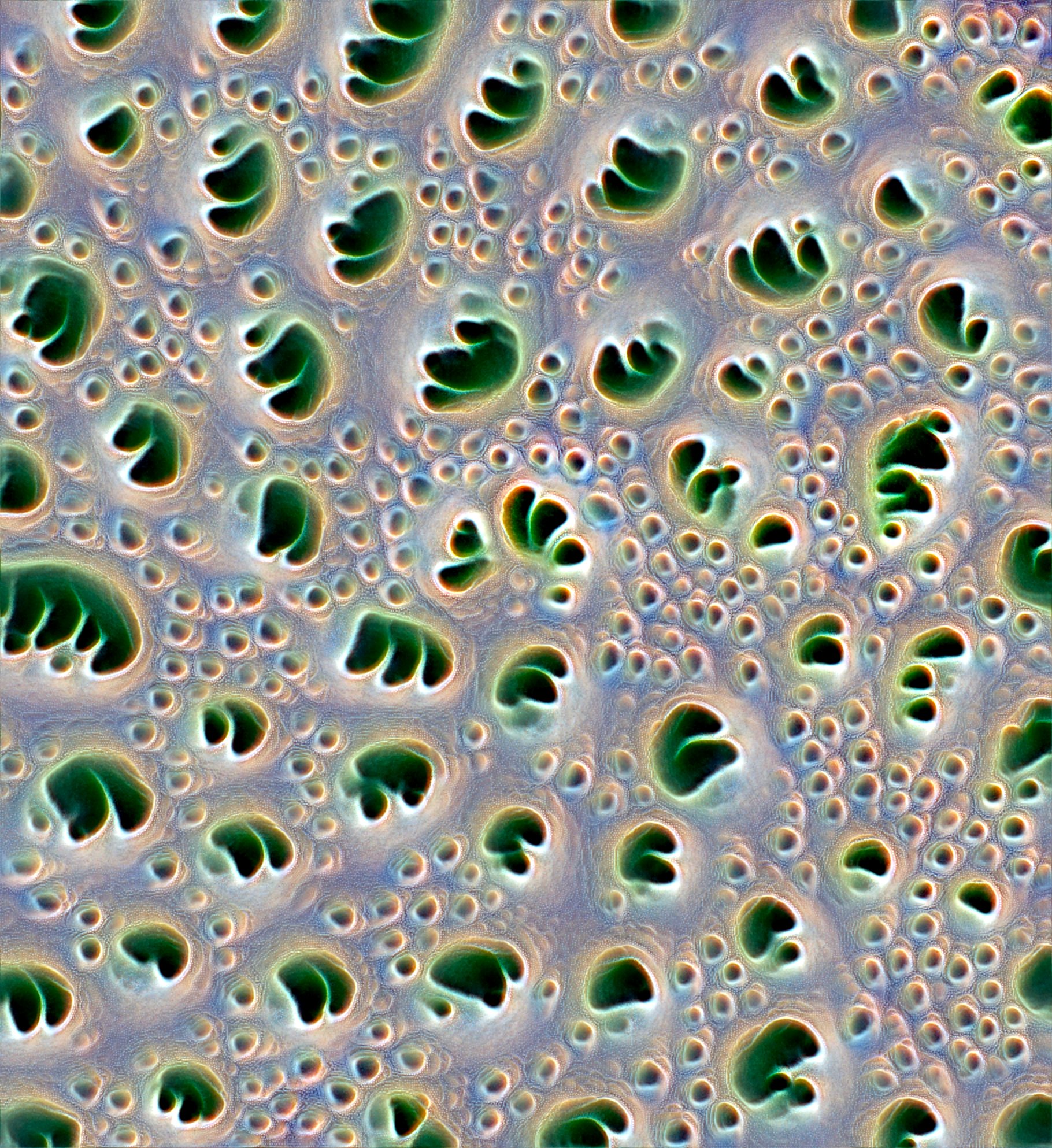

These outputs were absolutely stunning. I was glad to finally bring a meaningful contribution to this project and surprise Alexander with an innovative idea. Or so I thought: he mentioned having already explored a similar idea, which he called NCA fractalization.

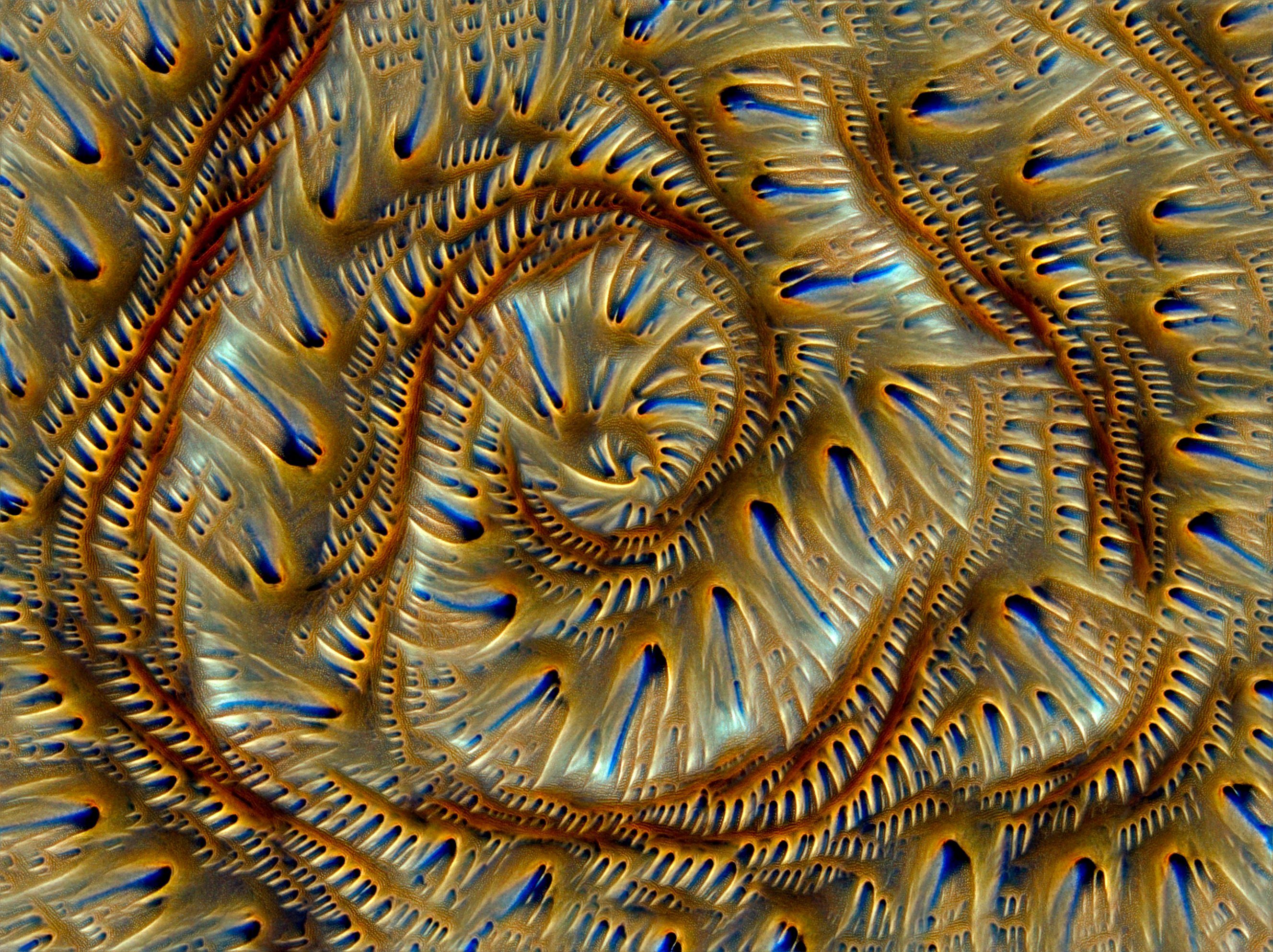

While the joy of bringing a fresh discovery to my collaborator was gone, I was fired up about refining NCA fractalization for our project. I improved the algorithm to adapt the number of passes to each fractal level, which gave better stability. Just like that, we started seeing proper fractals.

Adding fractal scales
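Adapting the number of passes to the fractal level can be expressed as a schedule function. The schedule below (many passes on the coarse grid, halving at each finer level, with a floor) is purely hypothetical: the rule used in Genomes isn't given, and nothing here shows which schedule is stable. What the sketch does show is the cost arithmetic, counted in cell updates, compared with the first approach's 20 passes at pixel resolution.

```python
def passes_for_level(level, base_passes=16, min_passes=2):
    """Hypothetical schedule: many passes on the coarse grid, halving at each finer level."""
    return max(min_passes, base_passes >> level)


def pyramid_cost(coarse_side, levels, schedule=passes_for_level):
    """Total cell updates for a square pyramid whose side doubles at every level."""
    total = 0
    for level in range(levels):
        side = coarse_side << level  # coarse_side * 2**level
        total += schedule(level) * side * side
    return total


def naive_cost(pixel_side, pixel_passes=20):
    """First approach: pixel_passes passes directly at pixel resolution (coarse run ignored)."""
    return pixel_passes * pixel_side * pixel_side
```

For a 64-cell coarse grid refined over 5 levels up to 1024x1024, `pyramid_cost(64, 5)` gives 3,080,192 cell updates, versus 20,971,520 for `naive_cost(1024)`, roughly 6.8 times fewer.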

At this point the project was in great shape and we were both happy with it. The outputs were great, diverse, and sometimes really powerful. We explored a few more ideas, but eventually nothing stuck, and we spent the remaining time cleaning up the code, fixing bugs, auto-purging bad pairs, etc.

We implemented one last idea related to the fractal nature of the algorithm. Instead of setting a fixed number of fractal levels manually, we made it so that the higher the resolution, the more fractal levels are computed. We wanted the algorithm to reveal even more detail as time goes on and computational power increases. Right now I can render up to 7680x4320 before reaching the 8K texture limit. But we can imagine a future where we can render even deeper…

Check the 8K render at full resolution here
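Tying the number of levels to the resolution can be sketched as follows: keep a coarse grid of fixed size, and add levels until the finest one reaches one cell per pixel. The coarse grid size (`coarse_cells=64`) and the function name are hypothetical; the 8192-pixel cap stands in for the 8K texture limit mentioned above (in a browser, WebGL reports the actual limit through `gl.getParameter(gl.MAX_TEXTURE_SIZE)`).

```python
import math

MAX_TEXTURE_SIZE = 8192  # assumed cap; real GPUs report their own limit


def fractal_levels(width, height, coarse_cells=64):
    """Number of pyramid levels needed to refine a coarse grid down to 1 cell per pixel."""
    long_side = max(width, height)
    if long_side > MAX_TEXTURE_SIZE:
        raise ValueError("resolution exceeds the texture size limit")
    cell_px = long_side / coarse_cells  # size of one coarse cell, in pixels
    # Each extra level halves the cell size; count the coarse level itself too.
    return max(1, math.ceil(math.log2(cell_px)) + 1)
```

With these assumptions, 1920x1080 needs 6 levels and 7680x4320 needs 8, the finest level being 8192 cells wide, just inside the limit.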

Finally, I'll leave you with a few minted iterations I particularly enjoy.

As a reminder, you can explore the whole collection on fxhash!